New research published today in Science Advances reveals that the largest expansion of coral reefs in the past 100 million years happened between about 20 and 10 million years ago, in the region between Australia and Southeast Asia.

This vast reef system likely laid the foundations for the extraordinary diversity of marine life we see today.

Coral reefs are among the most diverse ecosystems on Earth. They support about a quarter of all marine species while covering less than 1% of the oceans. Yet scientists have long grappled with the question of how such immense diversity arose in the first place. Where did it begin, and what made it possible?

Our new study uncovers a turning point deep in Earth’s history – a time when reefs didn’t just grow, but expanded on a scale far beyond anything we see today. This expansion may have created the ecological space needed for modern coral reef life to flourish.

Coral reefs are major biodiversity hotspots. Ahmer Kalam/Unsplash

An enduring mystery

Biodiversity simply refers to the variety of life in a given place. On coral reefs, this diversity is staggering: thousands of species of fish, corals and other organisms coexist in tightly packed ecosystems.

However, despite decades of research, the origins of this richness have remained an enduring mystery.

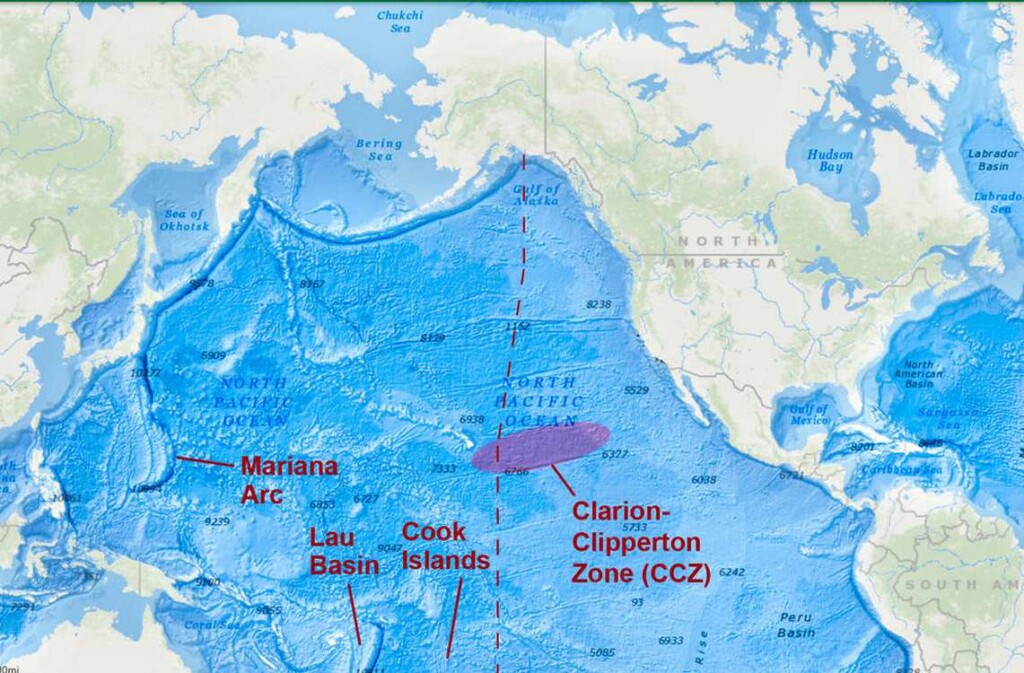

Our new study reveals that changes in environmental, biological and tectonic conditions about 20 million years ago promoted the dramatic expansion of coral reefs across a region stretching between Australia and Southeast Asia.

Today, this area is known as the Indo-Australian Archipelago. It’s recognised as a global hotspot of marine biodiversity, especially in an area called the Coral Triangle.

The expansion of reefs in this area coincided with the emergence of many familiar reef organisms, including plating corals and iconic fish groups like parrotfishes.

To uncover this, we combined evidence from geological records, fossils and genetic data. Together, these independent lines of evidence allowed us to pinpoint when and where modern reef biodiversity began to take shape, without relying on any single source.

Our results suggest reef expansion itself played a crucial role in generating biodiversity. As reefs grew larger, they likely created new habitats and ecological opportunities, allowing species to evolve and diversify.

We have now named this ancient network of reefs the Great Indo-Australian Miocene Reef System. The large reefs in this system were mostly built by corals and crustose coralline algae, a group of algae essential for holding reef structures together. These reefs also provided important habitat for fish groups that we see on coral reefs today, such as surgeonfishes and butterflyfishes.

Remnants of an epic reef

Surprisingly, the region where this expansion occurred is not where the largest reefs are found today. Instead, reefs off northwestern Australia – including Ashmore Reef, Scott Reef, and the Rowley Shoals – may be remnants of what was once one of the largest reef systems to have ever existed.

Previous geological work has shown this ancient west Australian barrier reef rivalled the extent of the present-day Great Barrier Reef. The new findings go further, suggesting individual reefs within this system may have been far larger than any modern reef.

However, there are still uncertainties. Reconstructing ecosystems from millions of years ago requires combining incomplete records. Some aspects of reef size, and of how these ecosystems were connected, remain difficult to resolve because the geological record preserves only remnants of what were once entire reef systems.

But the overall pattern is clear. A massive expansion of reefs about 20 million years ago coincided with the rise of modern marine diversity.

The message is also simple. To understand where biodiversity is today, we need to look deep into the past. The richest ecosystems on Earth may owe their origins to places that no longer appear exceptional – hidden chapters of Earth’s history that continue to shape life in our oceans.

Coral reefs support thousands of species in a small area. Francesco Ungaro/Unsplash

Alexandre Siqueira, ARC DECRA and Vice-Chancellor's Research Fellow, School of Science, Edith Cowan University

This article is republished from The Conversation under a Creative Commons license. Read the original article.
