Human vision: what we actually see – and don’t see – tells us a lot about consciousness

Henry Taylor, University of Birmingham

What can you see right now? This might seem like a silly question, but what enters your consciousness is not the whole story when it comes to vision. A great deal of visual processing in the brain goes on well below our conscious awareness.

Some studies have probed the unconscious depths of vision. One source of evidence comes from the neurological condition known as blindsight, which is caused by damage to areas of the brain involved in processing visual information. People with blindsight report that they are unable to see, either entirely or in a portion of their visual field. However, when asked to guess what is there, they can often do so with remarkable accuracy.

For example, in an experiment published in 2004 on someone with blindsight, a black bar was displayed in the portion of the visual field to which the person was blind. The person was asked to “guess” whether the bar was vertical or horizontal.

Despite denying any conscious awareness of the bar, the participant could answer correctly at a level well above chance. The participant even showed evidence of being able to pay attention to the bar – they were faster to respond when an arrow (placed in a healthy area of their visual field) correctly indicated the location of the bar.

The most popular interpretation (though not the only one) is that people with blindsight can see these objects, but not see them consciously. They see what is there, but it all goes on unconsciously, below their awareness.

The phenomenon of inattentional blindness seems to show you can see without the information crossing into your consciousness. Anyone can experience inattentional blindness. The phenomenon has been known about for a long time, but we can most easily get a handle on it by looking at a well-known experiment reported in 1999.

In this experiment, participants are shown a video of people playing basketball, and told to count the number of passes between the players wearing a white shirt. If you’ve never done this before, I urge you to stop reading now and watch the video.

In many cases, people are so busy counting the passes that they completely miss a large gorilla walking across the middle of the scene and beating its chest, then walking off. The gorilla’s right there, in the centre of your visual field. Light from the gorilla enters your eyes, and is processed in the visual system, but somehow you missed it, because you weren’t paying attention to it.

The gorilla has more to teach us. In another experiment reported in 2013, radiologists were given a series of lung scans. They were told to look for nodules (which show up as small light coloured circles) on each scan. In one of the scans, a large picture of a dancing gorilla was superimposed on top of the lung scan. In this study, 83% of the radiologists failed to spot it, even though it was 48 times bigger than the average nodule they were looking for. Some of them even looked directly at the gorilla and still didn’t notice it!

The interpretation of these experiments is controversial. Some scientists suggest that in these kinds of cases, you consciously see the gorilla, but immediately forget it (although a dancing gorilla in someone’s lung doesn’t seem like the kind of thing you’d forget). Others argue that you see the gorilla, but the information never made its way into consciousness. You saw the gorilla, but unconsciously.

Let’s assume that in the case of blindsight, and inattentional blindness, the information is seen, but doesn’t make it all the way to consciousness. Then, the question is: what makes some information conscious, while other information stays unconscious? This is one of the central questions for consciousness studies in philosophy, psychology and neuroscience.

The brain’s loudspeaker

There’s no agreement on which is the best theory of consciousness, but in my opinion, the strongest contender is the global neuronal workspace theory.

According to this theory, consciousness is all to do with a particular area of the brain which is the seat of the “workspace”. The workspace is a system with a small capacity, so it can’t hold a lot of information at any one time. The job of the workspace is to take unconscious information and broadcast it to lots of different networks all across the brain. Global neuronal workspace theorists say that broadcasting the information in this way is what makes it conscious.

The job of the workspace is to act like the brain’s loudspeaker, and consciousness is the information that gets broadcast. The workspace takes unconscious information and boosts it so that many of the different systems in the brain hear about it and can use that information in their own processes. The late philosopher Daniel Dennett used to call consciousness “fame in the brain”. The workspace idea is similar.
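The loudspeaker idea can be sketched as a toy program. This is only an illustration of the limited-capacity broadcast described above, not a model of the brain: the `Workspace` class, the capacity of two, the listener lists and the signal strengths are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Workspace:
    """Toy limited-capacity workspace (illustrative only, not a brain model)."""

    capacity: int = 2  # assumed value; the article says only that capacity is small
    listeners: list[Callable[[list[str]], None]] = field(default_factory=list)

    def broadcast(self, signals: dict[str, float]) -> list[str]:
        # The strongest signals win the few available slots...
        winners = sorted(signals, key=signals.get, reverse=True)[: self.capacity]
        # ...and only those winners are sent to every listening system.
        for listener in self.listeners:
            listener(winners)
        return winners


# Two hypothetical downstream systems that "hear" the broadcast.
memory_log: list[str] = []
speech_log: list[str] = []
workspace = Workspace(capacity=2, listeners=[memory_log.extend, speech_log.extend])

# Hypothetical signal strengths while counting basketball passes.
signals = {"pass count": 0.9, "white shirts": 0.7, "gorilla": 0.2}
conscious = workspace.broadcast(signals)
unconscious = [name for name in signals if name not in conscious]
```

In this sketch the gorilla is processed (it is in `signals`) but never broadcast, so no other system gets to use it.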

One of the most striking implications of the global neuronal workspace theory is how little information makes it to consciousness. Since the workspace has quite a small capacity, it follows that we can only ever be conscious of a little at a time. We might think there’s a rich visual world in front of us, full of details, all of which we’re conscious of, but really – according to the theory – we’re only ever conscious of a small portion of that.

Some philosophers and scientists have objected to the theory on these grounds. They suggest that consciousness “overflows” the workspace: we are conscious of more information than can “fit” into the workspace at any one time. Even with these debates still ongoing, I think the global neuronal workspace theory gives us a reasonably clear answer to the question of what consciousness is for, and how it interacts with other systems in the brain.

In our brains, consciousness is only the tip of a very large iceberg. But the global neuronal workspace theory might give us insight into what makes that tip so special.

Henry Taylor, Associate Professor, Department of Philosophy, University of Birmingham

This article is republished from The Conversation under a Creative Commons license. Read the original article.

The future remains bleak for corals – but not all reefs are doomed

A recent report on global tipping points warned that coral reefs face widespread dieback and have reached a point from which they cannot recover.

But in our new research, we show this might not be the case for some reefs if corals can gain tolerance to rising temperatures, or if we can cut greenhouse gas emissions and restore reefs with heat-tolerant corals at scale.

Nevertheless, the outlook likely remains bleak.

Coral reefs provide habitat for thousands of other species in tropical oceans. They deliver economic value through fisheries and tourism and provide shoreline protection from storm surges and extreme weather by dampening the impact of waves.

However, coral reefs are vulnerable to the effects of climate change. Our study combines previously published assessments of climate impacts on different coral reefs and reviews the scientific consensus to examine how long reef structures could persist as climate change intensifies.

Ocean warming, acidification, darkening and deoxygenation all threaten the persistence of coral reefs. Ocean warming brings marine heatwaves, which are the leading cause of mass coral bleaching that has led to a global decline in coral cover.

Marine heatwaves have already led to a global decline in coral reefs. Christopher Cornwall, CC BY-NC-ND

Corals are animals that house microalgae within their tissues that provide sugar in exchange for nitrogen. When temperatures become too hot, corals expel these symbiotic microalgae, leaving behind white skeletons.

Ocean acidification reduces the ability of corals to build their skeletons through a process called calcification. Warming, darkening and deoxygenation can also reduce calcification.

When corals expel their symbiotic algae, all that remains are bleached skeletons. Chris Perry, CC BY-NC-ND

Coral reefs are built by adding calcium carbonate, coming mostly from corals but also coralline algae and other calcareous seaweeds. But as the ocean’s pH (a measure of acidity) is reduced, processes called bio-erosion and dissolution act to remove calcium carbonate.

Our meta-analysis examined how climate change affects the calcification and bio-erosion of coral reefs and we then applied these results to a global data set of reef growth.

There is no scientific consensus on which organisms will build future coral reefs. We explore the four most likely scenarios:

1. Present-day extreme reefs represent the future of coral reefs. These are locations where temperatures are already warmer, waters are becoming more acidic and oxygen has dropped to conditions similar to those expected at the end of the century. These reefs are dominated by coralline algae and slow-growing heat-resistant corals.

Some reefs already experience conditions expected at the end of the century. Steeve Comeau, CC BY-NC-ND

2. Presently degraded reefs take over future reefs. These reefs are dominated by bio-eroders such as sponges and sea urchins and have low coral cover.

3. Corals can gain heat tolerance to an extent that keeps pace with low to moderate greenhouse gas emissions scenarios. Under these scenarios, only about 36% of global corals would be lost and there would be a moderate reduction in growth. These heat-tolerant reefs are dominated by faster growing corals with symbiotic microalgae that can evolve heat tolerance.

4. Reefs where restoration practices include using heat-tolerant corals that can then disperse to other regions. These restored reefs would have lower coral cover in remote regions lacking restoration or with unsuccessful restoration practices. This kind of reef restoration would need to cover half of global coral reefs to maintain net growth – an unlikely scenario.

We found coral reefs transition to net erosion under all scenarios, even under low to moderate greenhouse gas emissions, meaning they are dissolving or being eaten faster than they can grow. Only reefs with heat-tolerant corals could prevent this from occurring.
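The idea of net erosion can be made concrete with a small budget calculation: a reef grows only if calcification outpaces bio-erosion and dissolution combined. The function name and all the numbers below are hypothetical, in arbitrary units, and are not taken from the study.

```python
def net_reef_growth(calcification: float, bioerosion: float, dissolution: float) -> float:
    """Carbonate added minus carbonate removed (arbitrary units)."""
    return calcification - (bioerosion + dissolution)


# Hypothetical values for illustration only; these are not measurements.
healthy_reef = net_reef_growth(calcification=10.0, bioerosion=3.0, dissolution=1.0)
degraded_reef = net_reef_growth(calcification=3.0, bioerosion=4.0, dissolution=1.0)

# A negative budget means the reef is being removed faster than it is built.
healthy_is_eroding = healthy_reef < 0
degraded_is_eroding = degraded_reef < 0
```

With these made-up inputs the first reef has a positive budget and the second a negative one, which is what "net erosion" means here.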

The next step for the scientific community is to determine which reefs can persist in the future using global efforts to combine information. The major issue is that we are missing measurements from large parts of the Pacific, and we do not know how deoxygenation or coastal darkening will impact coral reefs. The processes of reef bio-erosion and dissolution are also poorly described.

Although the climate has been altered to the point of threatening the future survival of coral reefs, their fate is not doomed yet if we act now.

Another question is how long reef structures will persist after living corals are removed. We do not have an answer yet. It will take global efforts to rapidly obtain these measurements to better manage and protect coral reefs before climate change intensifies.

It is up to governments everywhere, including New Zealand, to better support these initiatives before it is too late.

Christopher Cornwall, Lecturer in Marine Biology, Te Herenga Waka — Victoria University of Wellington and Orlando Timmerman, Doctoral Candidate in Earth Sciences, University of Cambridge

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Microbes in Antarctica survive the freezing and dark winter by living on air

Ry Holland, Monash University

Winter in Antarctica is long and dark. Temperatures remain well below freezing. In many places, the Sun sets in April and does not rise above the horizon again until August. Without sunlight, photosynthetic life such as plants, mosses and algae cannot make energy.

But that’s not to say all life stops.

In a new study published in The ISME Journal, my colleagues and I show that Antarctic microbes make energy from the air at temperatures as low as –20°C. This finding improves our understanding of how life survives at temperature extremes in Antarctica – and how climate change will affect this important process.

How to make energy from air

In 2017, scientists showed that a large number of Antarctic microbes can generate energy from atmospheric gases present at very low concentrations.

This process is called “aerotrophy”. By using enzymes that are very finely tuned to “sniff out” the hydrogen and carbon monoxide in the atmosphere, these microbes have found a way to make energy from the air itself – a huge advantage in Antarctica’s nutrient-poor desert soils.

What remained unknown until now was the temperature limits of this process. Could aerotrophy be a way to power the continent’s soil communities through the winter?

Measuring how quickly these microbes consume such a small amount of fuel can be difficult.

From 2022–24, we collected surface soil samples from different areas across East Antarctica and analysed them in our lab.

We measured how quickly they can use the atmospheric gases. We also extracted all the DNA from the soil microbes and sequenced it. This tells us what microbes are present, what genes they have, and what they are capable of using as energy sources.

We showed aerotrophy happening in the lab at representative summer (4°C) and winter (–20°C) temperatures. This means hydrogen and carbon monoxide are a viable food source not just over the summer months, but year-round. What was even more surprising though, was the upper temperature limit.

Soil temperatures in Antarctica rarely rise above 20°C. Yet we found microbes in these soils that continued to generate energy from hydrogen up to a staggering 75°C. It seems as though microbes in Antarctic soils are well adapted to the continent’s cold temperatures, but not restricted to them. It’s a bit like seeing a penguin thrive in a tropical jungle.

We also wanted to see this process occurring in Antarctica itself, so two years ago we brought the lab down south. We collected fresh soil samples, sealed them in glass vials, and took gas samples.

For the first time, it was clear that under real-world conditions these soil microbes were still munching their way through hydrogen.

DNA sequencing has shown us that the vast majority of microbes in Antarctic soils encode the genes to gain energy from hydrogen. Many of these bacteria also have genes to take carbon from the atmosphere.

These aerotrophs are “primary producers”, generating new biomass from the air itself.

In most land-based ecosystems, photosynthesis is thought to be the bottom of the food chain. Photosynthesis takes energy from sunlight and carbon from the atmosphere and turns it into yummy organic compounds.

It’s what makes plants grow. Plants are primary producers that are eaten by herbivores, which are then eaten by carnivores.

In Antarctica’s desert soils, photosynthesis is relatively rare. Instead, we hypothesise that aerotrophy fulfils the primary producer role in many places.

This makes sense because we now know that, unlike sunlight-dependent photosynthesis, aerotrophy can happen year-round. Another benefit is that it doesn’t require liquid water, whereas photosynthesis does.

Aerotrophy clearly has an important role in Antarctic ecosystems. So next, we wanted to determine how global warming might affect this process.

Under low-emissions scenarios, we predict a 4% increase in how quickly aerotrophs use atmospheric hydrogen. Under very high-emissions scenarios, this increase rises to 35%. The numbers are similar for carbon monoxide.

Although hydrogen isn’t a greenhouse gas itself, it is important because it affects how long some greenhouse gases, including methane, hang around in the atmosphere.

Soils (including the microbes that live in them) are responsible for 82% of all hydrogen consumed on Earth. In other words, they are a hydrogen sink. This is a crucial component in the global hydrogen cycle.

There are a lot of factors that determine how microorganisms will respond to climate change. Temperature is just one of them. This study is an important piece of the puzzle as scientists figure out how resilient Antarctica’s unique microbial ecosystems are.

Ry Holland, Research Fellow in Microbial Ecology, Monash University

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Why your brain has to work harder in an open-plan office than private offices: study

Since the pandemic, offices around the world have quietly shrunk. Many organisations don’t need as much floor space or as many desks, given many staff now do a mix of hybrid work from home and the office.

But on days when more staff are required to be in, office spaces can feel noticeably busier and noisier. Despite so much focus on getting workers back into offices, there has been far less focus on the impacts of returning to open-plan workspaces.

Now, more research confirms what many suspected: our brains have to work harder in open-plan spaces than in private offices.

What the latest study tested

In a recently published study, researchers at a Spanish university fitted 26 people, aged in their mid-20s to mid-60s, with wireless electroencephalogram (EEG) headsets. EEG testing can measure how hard the brain is working by tracking electrical activity through sensors on the scalp.

Participants completed simulated office tasks, such as monitoring notifications, reading and responding to emails, and memorising and recalling lists of words.

Each participant was monitored while completing the tasks in two different settings: an open-plan workspace with colleagues nearby, and a small enclosed work “pod” with clear glazed panels on one side.

The researchers focused on the frontal regions of the brain, responsible for attention, concentration, and filtering out distractions. They measured different types of brain waves.

As neuroscientist Susan Hillier explains in more detail, different brain waves reveal distinct mental states:

- “gamma” is linked with states or tasks that require more focused concentration

- “beta” is linked with higher anxiety and more active states, with attention often directed externally

- “alpha” is linked with being very relaxed, and passive attention (such as listening quietly but not engaging)

- “theta” is linked with deep relaxation and inward focus

- and “delta” is linked with deep sleep.

The Spanish study found that the same tasks done inside the enclosed pod vs the open-plan workspace produced completely opposite patterns.

It takes effort to filter out distractions

In the work pod, the study found beta waves – associated with active mental processing – dropped significantly over the experiment, as did alpha waves linked to passive attention and overall activity in the frontal brain regions.

This meant people’s brains needed progressively less effort to sustain the same work.

The open-plan office testing showed the reverse.

Gamma waves, linked to complex mental processing, climbed steadily. Theta waves, which track both working memory and mental fatigue, increased. Two key measures also rose significantly: arousal (how alert and activated the brain is) and engagement (how much mental effort is being applied).

In other words, in the open-plan office participants’ brains had to work harder to maintain performance.

Even when we try to ignore distractions, our brain has to expend mental effort to filter them out.

In contrast, the pod eliminated most background noise and visual disruptions, allowing participants’ brains to work more efficiently.

Researchers also found much wider variability in the open office. Some people’s brain activity increased dramatically, while others showed modest changes. This suggests individual differences in how distracting we find open-plan spaces.

With only 26 participants, this was a relatively small study. But its findings echo a significant body of research from the past decade.

What past research has shown

In our 2021 study, my colleagues and I found a significant causal relationship between open-plan office noise and physiological stress. Studying 43 participants in controlled conditions – using heart rate, skin conductivity and AI facial emotion recognition – we found negative mood in open-plan offices increased by 25% and physiological stress by 34%.

Another study showed background conversations and noisy environments can degrade cognitive task performance and increase distraction for workers.

And a 2013 analysis of more than 42,000 office workers in the United States, Finland, Canada and Australia found those in open-plan offices were less satisfied with their work environment than those in private offices. This was largely due to increased, uncontrollable noise and lack of privacy.

Just as we now recognise poorly designed chairs cause physical strain, years of research has shown how workspace design can result in cognitive strain.

What to do about it

The ability to focus and concentrate without interruption and distraction is a fundamental requirement for modern knowledge work.

Yet uninterrupted work continues to be undervalued in workplace design.

Creating zones where workers can match their workplace environment to the task is essential.

Responding to having more staff doing hybrid work post-pandemic, LinkedIn redesigned its flagship San Francisco office. LinkedIn halved the number of workstations in open-plan areas, instead experimenting with 75 types of work settings, including work areas for quiet focus.

For organisations wanting to look after their workers’ brains, there are practical measures to consider. These include setting up different work zones, acoustic treatments and sound-masking technologies, and thoughtfully placed partitions to reduce visual and auditory distractions.

While adding those extra features may cost more upfront than an open-plan office, they can be worth it. Research has shown the significant hidden toll of poor office design on productivity, health and employee retention.

Providing workers with more choice in how much they’re exposed to noise and other interruptions is not a luxury. To get more done, with less strain on our brains, better design at work should be seen as a necessity.

Libby (Elizabeth) Sander, MBA Director & Associate Professor of Organisational Behaviour, Bond Business School, Bond University

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Deep-sea fish larvae rewrite the rules of how eyes can be built

Fabio Cortesi, The University of Queensland and Lily Fogg, University of Helsinki

The deep sea is cold, dark and under immense pressure. Yet life has found a way to prevail there, in the form of some of Earth’s strangest creatures.

Since deep-sea critters have adapted to near darkness, their eyes are particularly unique – pitch-black and fearsome in dragonfish, enormous in giant squid, barrel-shaped in telescope fish. This helps them catch the remaining rays of sunlight penetrating to depth and see the faint glow of bioluminescence.

Deep-sea fishes, however, typically start life in shallower waters in the twilight zone of the ocean (roughly 50–200 metres deep). This is a safe refuge to feed on plankton and grow while avoiding becoming a snack for larger predators.

Our new study, published in Science Advances, shows deep-sea fish larvae have evolved a unique way to maximise their vision in this dusky environment – a finding that challenges scientific understanding of vertebrate vision.

The nightmare of seeing in the twilight zone

The vertebrate retina, located at the back of the eye, has two main types of light-sensitive photoreceptor cells: rod-shaped for dim light and cone-shaped for bright light.

The rods and cones slowly change position inside the retina when moving between dim and bright conditions, which is why you temporarily go blind when you flick on the light switch on your way to the bathroom at night.

While vertebrates that are active during the daytime and predominantly inhabit bright light environments favour cone-dominated vision, animals that live in dim conditions, such as the deep sea or caves, have lost or reduced their cone cells in favour of more rods.

However, vision in twilight is a bit of a nightmare – neither rods nor cones are working at their best. This raises the question of how some animals, such as larval deep-sea fishes, can overcome the limitations of the cone-and-rod retina not only to survive but even to thrive in twilight conditions.

To understand how newly born deep-sea fishes see, we had to start where they do: in the twilight zone of the ocean.

We caught larval fish from the Red Sea using fine-meshed nets towed from near the surface to a depth of around 200m. This way we got hold of three different species – the lightfish (Vinciguerria mabahiss) and the hatchetfish (Maurolicus mucronatus), both members of the dragonfishes, and a member of the lanternfishes, the skinnycheek lanternfish (Benthosema pterotum). Next, we studied what their photoreceptor cells looked like on the outside and how they were wired on the inside.

First, we used high-resolution microscopy to examine the cells’ shape in great detail. Then we investigated retinal gene expression to identify which vision genes were activated as the fish grew. Finally, we got some experts in computational modelling of visual proteins on board to simulate which wavelengths of light these tiny fishes may perceive.

By combining all the approaches, we were able to piece together a picture of how these animals see their world. This sounds relatively simple, but working with deep-sea fishes is anything but easy.

While these animals are generally thought of as monsters of the deep, in reality, most reach only about the size of a thumb – even when fully grown. They are also very fragile and difficult to get.

Working with larval specimens that are only a few millimetres long is even more difficult. However, by leveraging support from the deep-sea research community, we were fortunate enough to combine specimens from multiple research expeditions to piece together an unusually complete picture of visual development in these elusive animals.

So, what did we discover?

For decades, scientists have thought that, as vertebrates grow, the development of their retina follows a predictable pattern: cones form first, then rods. But the deep-sea fish we studied do not follow this rule.

We found that, as larvae, they mostly use a mix-and-match type of hybrid photoreceptor. The cells they are using early on look like rods but use the molecular machinery of cones, making them rod-like cones.

In some of the species we studied, these hybrid cells were a temporary solution, replaced by “normal” rods as the fish grew and migrated into deeper, darker waters.

However, in the hatchetfish, which spends its whole life in twilight, the adults keep their rod-like cone cells throughout life, essentially building their entire visual system around this extra type of cell.

Our research shows this is not a minor tweak to the system. Instead, it represents a fundamentally different developmental pathway for vertebrate vision.

Biology doesn’t fit into neat boxes

So why bother with these hybrid cells?

It seems that to overcome the visual limitations of the twilight zone, rod-like cones offer the best of both worlds: the light-capturing ability of rods combined with the faster, less bright-light sensitive properties of cones. For a tiny fish trying to survive in the murky midwater, this could mean the difference between spotting dinner or becoming it.

For more than a century, biology textbooks have taught that vertebrate vision is built from two clearly defined cell types. Our findings show these tidy categories are much more blurred.

Deep-sea fish larvae combine features of both rods and cones into a single, highly specialised cell optimised for life in between light and darkness. In the murky depths of the ocean, deep-sea fish larvae have quietly rewritten the rules of how eyes can be built, and in doing so, remind us that biology rarely fits into neat boxes.

Fabio Cortesi, ARC Future Fellow, Faculty of Science, The University of Queensland and Lily Fogg, Postdoctoral Researcher, Helsinki Institute of Life Science, University of Helsinki

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Red flowers have a ‘magic trait’ to attract birds and keep bees away

Joshua J. Cotten

Adrian Dyer, Monash University and Klaus Lunau, Heinrich Heine Universität Düsseldorf

For flowering plants, reproduction is a question of the birds and the bees. Attracting the right pollinator can be a matter of survival – and new research shows how flowers do it is more intriguing than anyone realised, and might even involve a little bit of magic.

In our new paper, published in Current Biology, we discuss how a single “magic” trait of some flowering plants simultaneously camouflages them from bees and makes them stand out brightly to birds.

How animals see

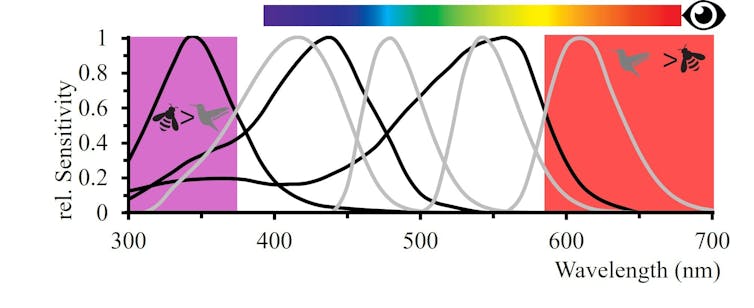

We humans typically have three types of light receptors in our eyes, which enable our rich sense of colours.

These are cells sensitive to blue, green or red light. From the input from these cells, the brain generates many colours including yellow via what is called colour opponent processing.

The way colour opponent processing works is that different sensed colours are processed by the brain in opposition. For example, we see some signals as red and some as green – but never a colour in between.

Many other animals also see colour and show evidence of also using opponent processing.

Bees see their world using cells that sense ultraviolet, blue and green light, while birds have a fourth type sensitive to red light as well.

Our colour perception illustrated with the spectral bar is different to bees that are sensitive to UV, blue and green, or birds with four colour photoreceptors including red sensitivity. Adrian Dyer & Klaus Lunau, CC BY

The problem flowering plants face

So what do these differences in colour vision have to do with plants, genetics and magic?

Flowers need to attract pollinators of the right size, so their pollen ends up on the correct part of an animal’s body so it’s efficiently flown to another flower to enable pollination.

Accordingly, birds tend to visit larger flowers. These flowers in turn need to provide large volumes of nectar for the hungry foragers.

But when large amounts of sweet-tasting nectar are on offer, there’s a risk bees will come along to feast on it – and in the process, collect valuable pollen. And this is a problem because bees are not the right size to efficiently transfer pollen between larger flowers.

Flowers “signal” to pollinators with bright colours and patterns – but these plants need a signal that will attract birds without drawing the attention of bees.

We know bee pollination and flower signalling evolved before bird pollination. So how could plants efficiently make the change to being pollinated by birds, which enables the transfer of pollen over long distances?

Avoiding bees or attracting birds?

A walk through nature lets us see with our own eyes that most red flowers are visited by birds, rather than bees. So bird-pollinated flowers have successfully made the transition. Two different theories have been developed that may explain what we observe.

One theory is the bee avoidance hypothesis, in which bird-pollinated flowers simply use a colour that is hard for bees to see.

A second theory is that birds might prefer red.

But neither of these theories seemed complete, as inexperienced birds don’t demonstrate a preference for a stronger red hue. However, bird-pollinated flowers do have a very distinct red hue, which suggests avoiding bees can’t solely explain why consistently salient red flower colours evolved.

A magical solution

In evolutionary science, the term magic trait refers to an evolved solution where one genetic modification may yield fitness benefits in multiple ways.

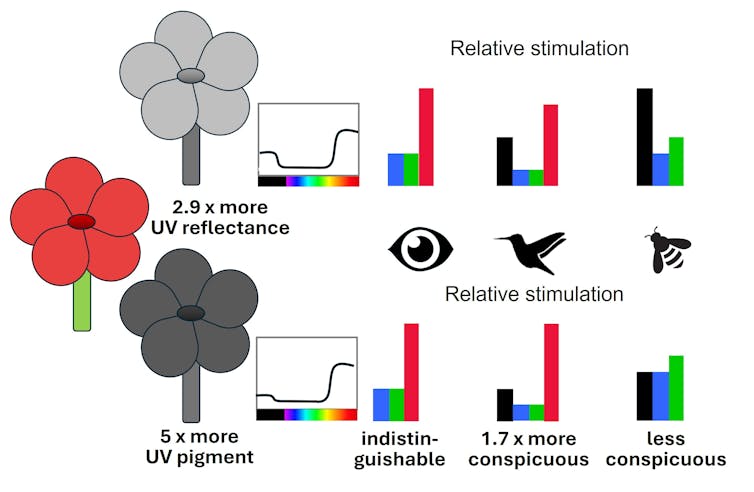

Earlier this month, a team working on how this might apply to flowering plants showed that a gene that modulates UV-absorbing pigments in flower petals can indeed have multiple benefits. This is because of how bees and birds view colour signals differently.

Bee-pollinated flowers come in a diverse range of colours. Bees even pollinate some plants with red flowers. But these flowers tend to also reflect a lot of UV, which helps bees find them.

The magic gene has the effect of reducing the amount of UV light reflected from the petal, making flowers harder for bees to see. But (and this is where the magic comes in) reducing UV reflection from a petal of a red flower simultaneously makes it look redder for animals – such as birds – which are believed to have a colour opponent system.
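The double effect described above can be illustrated with a toy calculation. This is only a sketch under stated assumptions: the reflectance values are invented for illustration, the bee signal is simplified to the strongest response among the three receptor types bees have, and the bird "redness" channel is a hypothetical red-minus-UV opponent comparison. None of these functions or numbers come from the study itself.

```python
# Toy model of how lowering UV reflection changes what bees and birds perceive.
# All numbers and both scoring functions are illustrative assumptions.

BEE_RECEPTORS = ("uv", "blue", "green")  # bees lack a red-sensitive receptor


def bee_signal(petal: dict) -> float:
    """Assumed bee visibility: strongest response among the bee's receptors."""
    return max(petal[band] for band in BEE_RECEPTORS)


def bird_redness(petal: dict) -> float:
    """Hypothetical opponent channel: red response minus UV response."""
    return petal["red"] - petal["uv"]


# Invented reflectance values between 0 (absorbs) and 1 (reflects fully).
uv_reflecting = {"uv": 0.6, "blue": 0.1, "green": 0.1, "red": 0.9}
uv_absorbing = {**uv_reflecting, "uv": 0.1}  # same petal, UV reflection reduced

for name, petal in (("UV-reflecting", uv_reflecting), ("UV-absorbing", uv_absorbing)):
    print(name, "bee signal:", bee_signal(petal), "bird redness:", bird_redness(petal))
```

In this sketch a single change (lower UV reflectance) both weakens the signal available to bees and strengthens the opponent "redness" signal for birds, which is the sense in which one trait yields two benefits.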

Red flowers look similar for humans, but as flowers evolved for bird vision a genetic change down-regulates UV reflection, making flowers more colourful for birds and less visible to bees. Adrian Dyer & Klaus Lunau, CC BY

Birds that visit these bright red flowers gain rewards – and with experience, they learn to go repeatedly to the red flowers.

One small gene change for colour signalling in the UV yields multiple beneficial outcomes by avoiding bees and displaying enhanced colours to entice multiple visits from birds.

We humans are fortunate that our red perception also lets us see the result of this clever little trick of nature: beautiful red flower colours. So on your next walk on a nice day, take a minute to view one of nature’s great experiments in finding a clever solution to a complex problem.

Adrian Dyer, Associate Professor, Department of Physiology, Monash University and Klaus Lunau, Professor, Institute of Sensory Ecology, Heinrich Heine Universität Düsseldorf

This article is republished from The Conversation under a Creative Commons license. Read the original article.

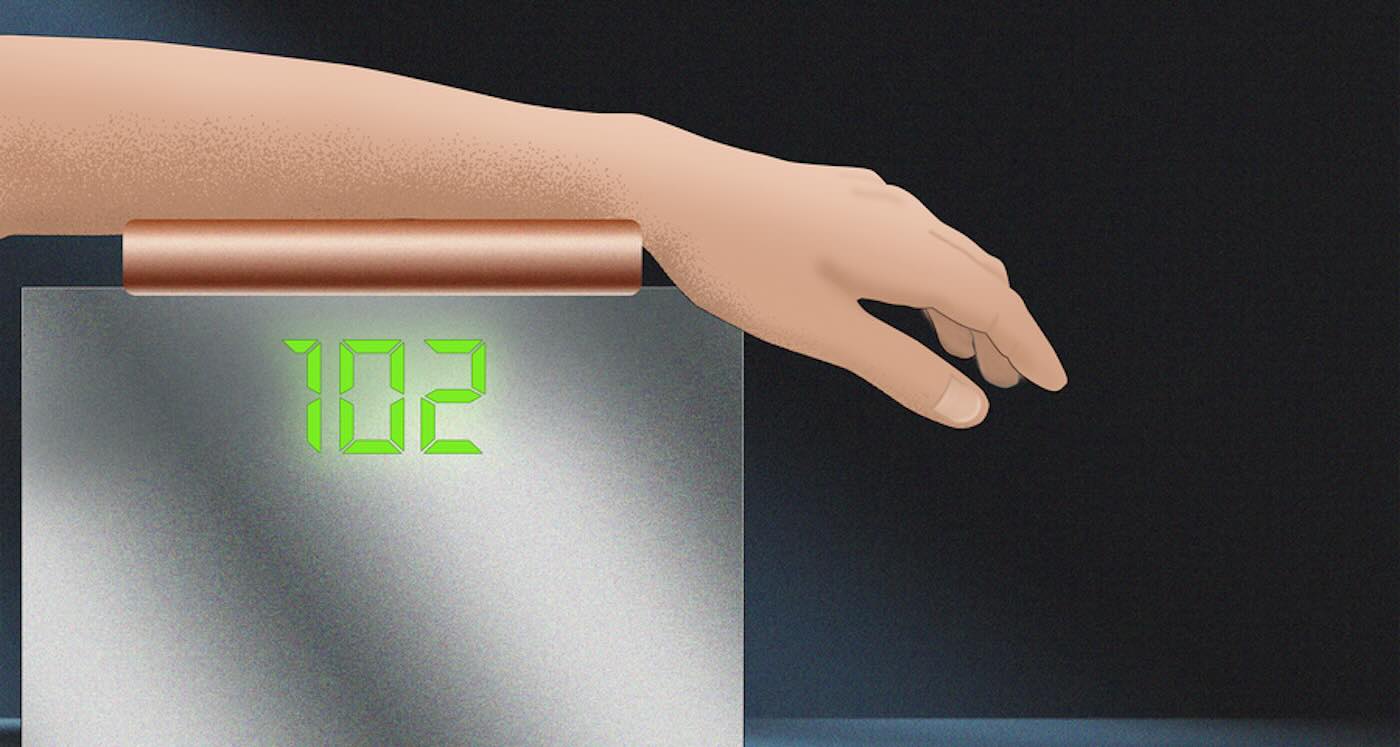

Simply Shining Light on Skin Can Replace Finger Pricks for People With Diabetes

Blood-glucose monitor uses light to spare diabetes patients from finger pricks – Credit: Christine Daniloff / MIT

Polar bears are adapting to climate change at a genetic level – and it could help them avoid extinction

But in our new study my colleagues and I found that the changing climate was driving changes in the polar bear genome, potentially allowing them to more readily adapt to warmer habitats. Provided these polar bears can source enough food and breeding partners, this suggests they may potentially survive these new challenging climates.

We discovered a strong link between rising temperatures in south-east Greenland and changes in polar bear DNA. DNA is the instruction book inside every cell, guiding how an organism grows and develops. In processes called transcription and translation, DNA is copied to generate RNA (molecules that reflect gene activity), which can lead to the production of proteins. Transcription also produces copies of transposons (TEs), also known as “jumping genes”: mobile pieces of the genome that can move around and influence how other genes work.

In carrying out our recent research we found big differences in temperature between the north-east and south-east regions of Greenland. Our team used publicly available polar bear genetic data from a research group at the University of Washington, US, to support our study. This dataset was generated from blood samples collected from polar bears in both northern and south-eastern Greenland.

Our work built on the University of Washington study, which discovered that this south-eastern population of Greenland polar bears was genetically different from the north-eastern population. It found that the south-east bears had migrated from the north and became isolated approximately 200 years ago.

The University of Washington researchers had extracted RNA from polar bear blood samples and sequenced it. We used this RNA sequencing data to look at RNA expression in relation to the climate. RNA molecules act like messengers, showing which genes are active. This gave us a detailed picture of gene activity, including the behaviour of TEs. Temperatures in Greenland have been closely monitored and recorded by the Danish Meteorological Institute, so we linked this climate data with the RNA data to explore how environmental changes may be influencing polar bear biology.

Does temperature change anything?

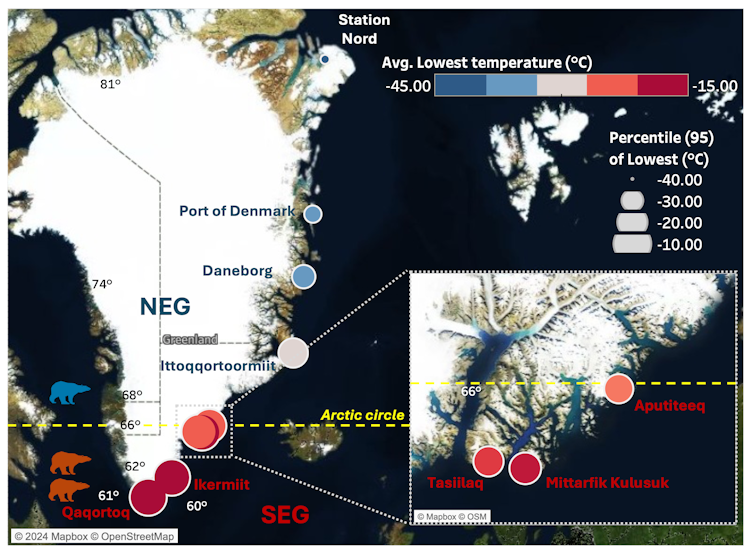

From our analysis we found that temperatures in the north-east of Greenland were colder and less variable, while south-east temperatures fluctuated and were significantly warmer. The figure below shows our data as well as how temperature varies across Greenland, with warmer and more volatile conditions in the south-east. This creates many challenges and changes to the habitats for the polar bears living in these regions.

In the south-east of Greenland, the margin of the ice sheet (the sheet itself spans 80% of Greenland) is rapidly receding, causing vast ice and habitat loss.

The loss of ice is a substantial problem for the polar bears, as it reduces the availability of the platforms they use to hunt seals, leading to isolation and food scarcity. The north-east of Greenland is a vast, flat Arctic tundra, while south-east Greenland is covered by forest tundra (the transitional zone between coniferous forest and Arctic tundra). The south-east has high levels of rain and wind, and steep coastal mountains.

Temperature across Greenland and bear locations

Author data visualisation using temperature data from the Danish Meteorological Institute. Locations of bears in south-east (red icons) and north-east (blue icons). CC BY-NC-ND

How climate is changing polar bear DNA

Over time the DNA sequence can slowly change and evolve, but environmental stress, such as a warmer climate, can accelerate this process.

TEs are like puzzle pieces that can rearrange themselves, sometimes helping animals adapt to new environments. Approximately 38.1% of the polar bear genome is made up of TEs. TEs come in many different families with slightly different behaviours, but in essence they are all mobile fragments that can reinsert randomly anywhere in the genome.

In the human genome, 45% is made up of TEs, and in plants the figure can be over 70%. Small protective molecules called piwi-interacting RNAs (piRNAs) can silence the activity of TEs.

Despite this, when an environmental stress is too strong, these protective piRNAs cannot keep up with the invasive actions of TEs. In our work we found that the warmer south-east climate led to a mass mobilisation of these TEs across the polar bear genome, changing its sequence. We also found that these TE sequences appeared younger and more abundant in the south-east bears, with over 1,500 of them “upregulated”, which suggests recent genetic changes that may help bears adapt to rising temperatures.

Some of these elements overlap with genes linked to stress responses and metabolism, hinting at a possible role in coping with climate change. By studying these jumping genes, we uncovered how the polar bear genome adapts and responds, in the shorter term, to environmental stress and warmer climates.

Our research found that some genes linked to heat stress, ageing and metabolism are behaving differently in the south-east population of polar bears. This suggests they might be adjusting to their warmer conditions. Additionally, we found active jumping genes in parts of the genome tied to fat processing, which is important when food is scarce. This could mean that polar bears in the south-east are slowly adapting to eating the rougher plant-based diets that can be found in the warmer regions. Northern populations of bears eat mainly fatty seals.

Overall, climate change is reshaping polar bear habitats, leading to genetic changes, with south-eastern bears evolving to survive these new terrains and diets. Future research could include other polar bear populations living in challenging climates. Understanding these genetic changes helps researchers see how polar bears might survive in a warming world – and which populations are most at risk.

Alice Godden, Senior Research Associate, School of Biological Sciences, University of East Anglia

This article is republished from The Conversation under a Creative Commons license. Read the original article.

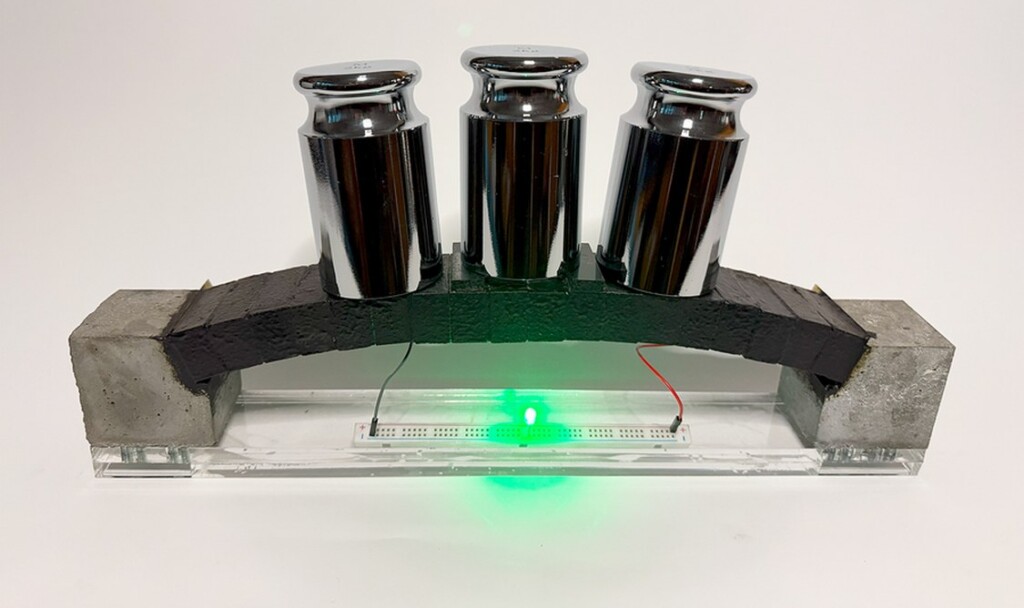

Cement Supercapacitors Could Turn the Concrete Around Us into Massive Energy Storage Systems

credit – MIT Sustainable Concrete Lab

Indian researchers develop smart portable device to detect toxic pesticides in water, food

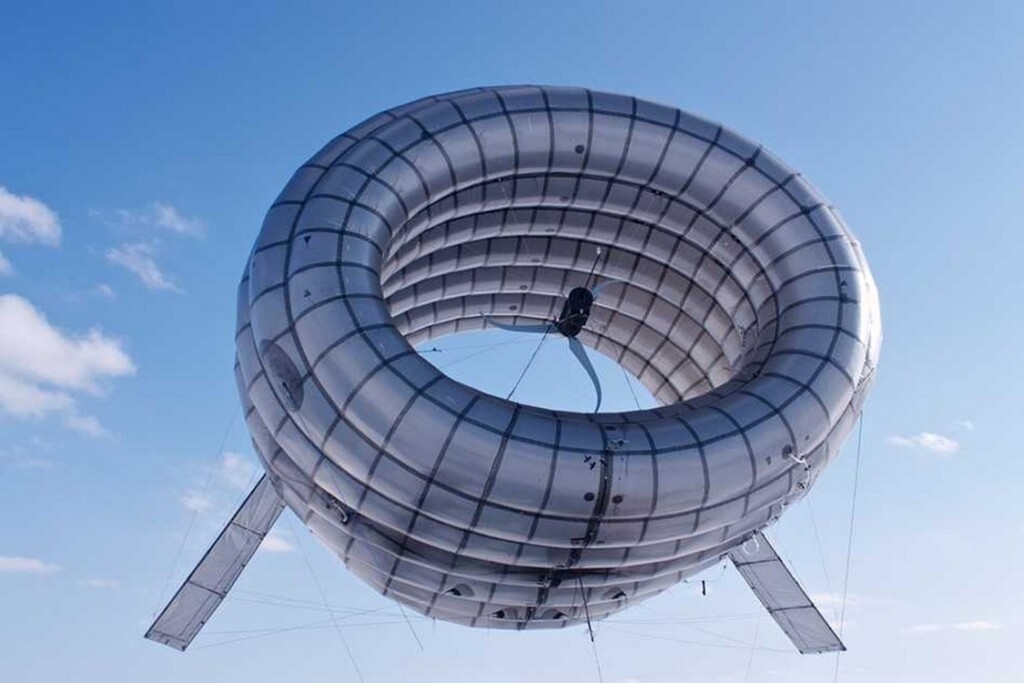

New Airship-style Wind Turbine Can Find Gusts at Higher Altitudes for Constant, Cheaper Power

Altaeros’ BAT – credit, Altaeros, via MIT

Biodegradable Plastic Made from Bamboo Is Stronger and Easy to Recycle

Bamboo forest – credit Bady Abbas, via Unsplash

Most red flowers are visited by birds, rather than bees.